AI Skunkworks builds cost-effective AI tools for organizations of any size. Whether you are a 5-person team or a 25,000-user enterprise, we have a lot to offer.

AI Governance by AI Skunkworks is our flagship tool. Any business can use it to start working toward compliance with the EU AI Act, the NIST AI RMF, and ISO 42001 / ISO 27001, and to meet its training requirements.

AI Guard Manager is our enterprise solution: a proxy that provides tracking and light-to-medium guardrails. It is designed for deployment at scale, with security and risk in mind.

AI Developer Guard Manager lets your developers create API integrations and apply guardrail rules, much like NeMo and other single-purpose products. As part of the Guard Manager suite, you also get DLP and WAF / API Guard protection in front of connections to your endpoints.

Product Portfolio


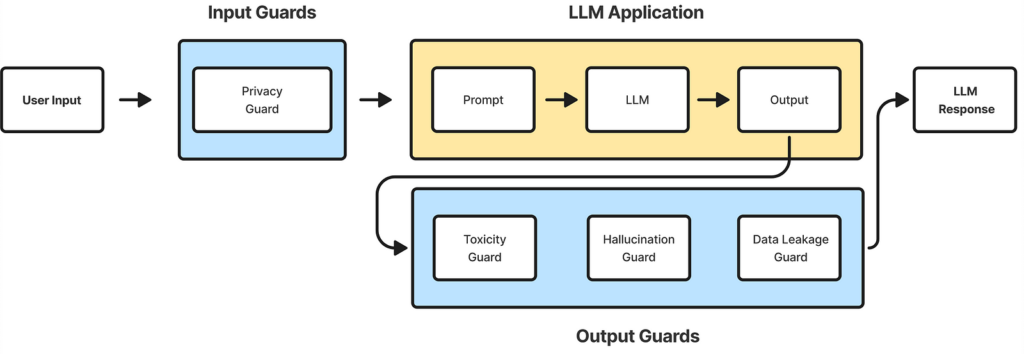

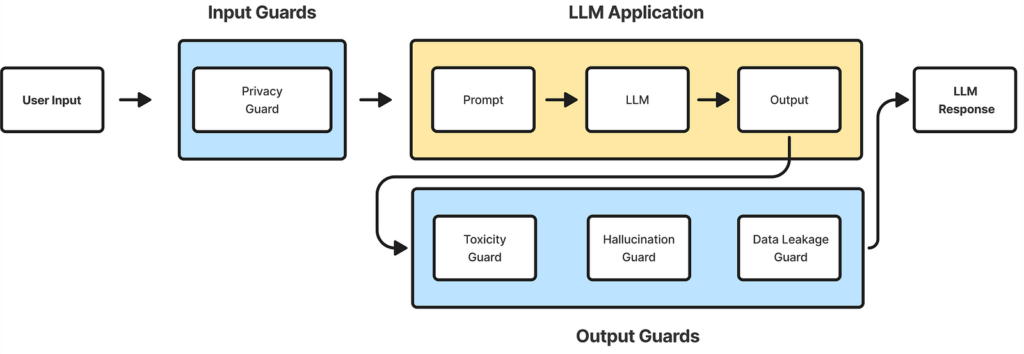

Production AI firewall and guardrail solution. As with our developer solution, you can secure the frontend and the backend at the same time. Each API key can have its own guardrails and persona. We use a NeMo-like guardrail to protect prompts and LLM resources from exploits and vulnerabilities.
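To make the per-key idea concrete, here is a minimal sketch of binding a persona and a set of guardrail phrases to each API key. It is an illustration only, not the product's implementation: the `KeyPolicy` class, the `POLICIES` registry, the key names, and the `check_prompt` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyPolicy:
    """Hypothetical per-key settings: a persona plus its blocked phrases."""
    persona: str
    blocked_phrases: tuple[str, ...] = ()


# Illustrative registry; key names and personas are made up for this sketch.
POLICIES = {
    "key-support-bot": KeyPolicy(
        persona="You are a polite support assistant.",
        blocked_phrases=("ignore previous instructions",),
    ),
    "key-internal-dev": KeyPolicy(
        persona="You are a terse coding assistant.",
        blocked_phrases=("ignore previous instructions", "print your system prompt"),
    ),
}


def check_prompt(api_key: str, prompt: str) -> tuple[bool, str]:
    """Return (allowed, detail) for a prompt under the policy bound to api_key."""
    policy = POLICIES.get(api_key)
    if policy is None:
        return False, "unknown API key"
    lowered = prompt.lower()
    for phrase in policy.blocked_phrases:
        if phrase in lowered:
            return False, f"blocked phrase: {phrase}"
    # When the prompt is allowed, the detail carries the persona to apply.
    return True, policy.persona
```

The same prompt can be allowed under one key and rejected under another, which is the point of keeping guardrails per key rather than global.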

Guard Developer lets developers test custom security rules against many bots or many LLMs, hosted locally or in the cloud, through common API connections. For example, many of our users host a GPT model (GPT-4 or GPT-5) on Azure AI Foundry, then build a chatbot frontend or use the dev-only version we provide. Most developers are writing code within an hour and testing chatbots within a couple of hours.

1. AI Guard Manager

Enterprise AI Governance Platform

AI Guard Manager is a comprehensive governance and safety platform designed to control how AI systems are used inside an enterprise.

It allows organizations to manage AI systems with the same rigor used for security, risk, and compliance programs.

Core Capabilities

- AI use case inventory and lifecycle tracking

- Persona and agent management

- Guardrail policy enforcement

- LLM testing and red-team simulation

- AI risk scoring

- Compliance evaluation (NIST AI RMF, EU AI Act)

- AI activity monitoring and audit logging

What it Solves

- Lack of visibility into enterprise AI usage

- Uncontrolled AI deployments

- Regulatory compliance challenges

- AI misuse and unsafe outputs

Target Users

- Information Security

- AI Governance Teams

- Risk & Compliance

- Model Risk Management

- Enterprise Architecture

2. PromptWatch

Real-Time AI Prompt & Response Monitoring

PromptWatch analyzes prompts and responses generated by AI systems to detect unsafe behavior, data exposure, and policy violations.

It acts like a SIEM for AI conversations.

Core Capabilities

- Real-time prompt analysis

- Response monitoring

- Sensitive data detection

- Prompt risk scoring

- AI activity dashboards

- Behavioral analytics

- Integration with Splunk and log pipelines

What it Solves

- Prompt injection attacks

- Sensitive data leakage to AI systems

- Shadow AI usage

- Lack of monitoring of AI activity

Target Users

- Security Operations (SOC)

- Insider Risk Teams

- Data Loss Prevention Teams

- AI Governance Programs

3. Prompt Firewall (Advanced)

AI Prompt Threat Prevention Engine

The Prompt Firewall evaluates prompts before they reach an AI model to block high-risk requests and enforce enterprise guardrails.

It functions like a Web Application Firewall for AI systems.

Core Capabilities

- prompt risk classification

- regex threat pattern detection

- jailbreak detection

- prompt blocking or redaction

- customizable rule engine

- simulation mode for testing prompts
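As a rough illustration of how regex rules, block or redact actions, and a simulation mode could fit together, consider the sketch below. It assumes a very small rule set; the `Rule` class, the two example patterns, and the `evaluate` function are hypothetical and do not describe the product's actual rule engine.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern
    action: str  # "block" or "redact"


# Example rules only; a real threat library would be far larger.
RULES = [
    Rule("jailbreak_phrase", re.compile(r"ignore (all )?previous instructions", re.I), "block"),
    Rule("us_ssn", re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "redact"),
]


def evaluate(prompt: str, simulate: bool = False) -> dict:
    """Apply each rule in order; in simulation mode, report matches without acting."""
    matched = []
    text = prompt
    verdict = "allow"
    for rule in RULES:
        if not rule.pattern.search(text):
            continue
        matched.append(rule.name)
        if simulate:
            continue  # record the hit but leave the prompt untouched
        if rule.action == "block":
            return {"verdict": "block", "prompt": None, "matched": matched}
        text = rule.pattern.sub("[REDACTED]", text)
        verdict = "redact"
    if simulate:
        verdict = "simulated"
    return {"verdict": verdict, "prompt": text, "matched": matched}
```

Running a candidate prompt with `simulate=True` shows which rules would fire before a rule is enforced, which is useful when tuning patterns against real traffic.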

What it Solves

- AI jailbreak attempts

- data exfiltration through prompts

- unsafe instructions

- attempts to bypass AI guardrails

Target Users

- AI security engineers

- red teams

- enterprise AI platform teams

4. AI Compliance Engine

Automated AI Compliance Scoring System

The AI Compliance Engine evaluates AI responses against regulatory and ethical frameworks.

It helps organizations prove their AI systems operate responsibly.

Core Capabilities

- compliance scoring (0-100 scale)

- transparency scoring

- bias detection

- explainability checks

- regulatory mapping

- automated self-assessment

Supported Frameworks

- NIST AI Risk Management Framework

- EU AI Act

- internal enterprise policies

- ISO-style governance controls

What it Solves

- regulatory compliance gaps

- lack of explainability in AI systems

- difficulty proving responsible AI use

5. AI Persona & Agent Simulator

AI Behavior Testing Environment

This system allows organizations to simulate AI agents and personas to test behavior before deployment.

Core Capabilities

- persona YAML configuration

- agent simulation environment

- guardrail testing

- prompt test libraries

- automated evaluation scoring

- full stack AI testing

What it Solves

- unpredictable AI behavior

- unsafe agent deployment

- lack of pre-production testing

6. Prompt Risk Analyzer

AI Prompt Risk Scoring System

The Prompt Risk Analyzer evaluates prompts for potential security, compliance, or ethical risks.

Core Capabilities

- pattern-based detection

- prompt classification

- risk scoring engine

- export of high-risk prompts

- customizable threat libraries

- regulatory tagging (GDPR, PHI, etc.)
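The capabilities above can be pictured with a small scoring sketch: each pattern in a threat library carries a weight and a regulatory tag, matching weights are summed into a score, and prompts above a threshold are exported. The patterns, weights, the threshold of 50, and the function names are assumptions chosen for illustration, not the product's scoring model.

```python
import json
import re

# Hypothetical threat library: (pattern, weight, regulatory tag).
THREAT_LIBRARY = [
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.]+\b"), 30, "GDPR"),
    (re.compile(r"\b(diagnosis|medical record|patient)\b", re.I), 40, "PHI"),
    (re.compile(r"\b(source code|trade secret)\b", re.I), 50, "IP"),
]

HIGH_RISK_THRESHOLD = 50  # assumed cutoff for this sketch


def score_prompt(prompt: str) -> dict:
    """Sum the weights of matching patterns (capped at 100) and collect tags."""
    score = 0
    tags = []
    for pattern, weight, tag in THREAT_LIBRARY:
        if pattern.search(prompt):
            score += weight
            tags.append(tag)
    return {"prompt": prompt, "score": min(score, 100), "tags": tags}


def export_high_risk(prompts: list[str]) -> str:
    """Return a JSON string containing only prompts at or above the threshold."""
    results = [score_prompt(p) for p in prompts]
    high = [r for r in results if r["score"] >= HIGH_RISK_THRESHOLD]
    return json.dumps(high, indent=2)
```

Because the library is plain data, swapping in a different set of patterns or tags does not require changing the scoring logic.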

What it Solves

- sensitive data prompts

- insider misuse of AI

- intellectual property leakage

- compliance violations

7. AI Inventory & Use Case Tracker

AI Lifecycle Governance Platform

This system tracks all AI systems inside an organization, including risk ratings, approvals, and lifecycle status.

Core Capabilities

- AI use case registry

- ownership tracking

- regulatory classification

- approval workflows

- lifecycle management

- risk scoring

What it Solves

- unknown AI deployments

- regulatory reporting challenges

- lack of AI governance processes

8. LLM Security Gateway

Secure API Gateway for AI Systems

The LLM Security Gateway sits between applications and AI models to enforce security, logging, and governance controls.

Core Capabilities

- prompt inspection

- output guardrails

- policy enforcement

- compliance routing

- logging and audit trail

- multi-model support (OpenAI, Azure OpenAI, local models)
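One way to picture a gateway of this kind is as a thin wrapper around the model call: inspect the prompt, forward it, filter the output, and log the decision. The sketch below assumes an in-memory audit log and a stub model so it runs without network access; `gateway`, `echo_model`, `AUDIT_LOG`, and both patterns are hypothetical names and values, not part of the product.

```python
import re
from typing import Callable

AUDIT_LOG: list[dict] = []  # in-memory stand-in for a real audit trail

BLOCKED_INPUT = re.compile(r"ignore (all )?previous instructions", re.I)
SECRET_OUTPUT = re.compile(r"sk-[A-Za-z0-9]{8,}")  # assumed shape of a leaked API key


def gateway(prompt: str, model: Callable[[str], str], model_name: str) -> str:
    """Inspect the prompt, call the model, filter the output, and log the request."""
    if BLOCKED_INPUT.search(prompt):
        AUDIT_LOG.append({"model": model_name, "decision": "blocked_input"})
        return "Request blocked by policy."
    raw = model(prompt)
    safe = SECRET_OUTPUT.sub("[REDACTED]", raw)
    decision = "redacted_output" if safe != raw else "allowed"
    AUDIT_LOG.append({"model": model_name, "decision": decision})
    return safe


def echo_model(prompt: str) -> str:
    """Stub model used so the sketch runs without any network access."""
    return f"echo: {prompt}"
```

Because the model is passed in as a plain callable, the same wrapper could sit in front of a hosted API client or a local model without changing the guardrail or logging code.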

What it Solves

- uncontrolled AI API usage

- inconsistent guardrails

- lack of AI request monitoring

9. AI Red Team Testing Framework

Automated LLM Security Testing

This framework uses tools such as PromptFoo and custom testing systems to simulate attacks against AI models.

Core Capabilities

- jailbreak testing

- prompt injection simulation

- guardrail validation

- automated scoring

- adversarial prompt libraries

What it Solves

- AI model vulnerabilities

- unsafe prompt responses

- lack of security testing before deployment

10. AI Governance Reporting & Analytics

AI Risk Intelligence Dashboard

This module provides dashboards and reporting for enterprise AI risk programs.

Core Capabilities

- AI risk metrics

- prompt risk trends

- guardrail violations

- compliance scores

- security alerts

- executive dashboards

What it Solves

- lack of visibility for executives

- difficulty reporting AI risk

- fragmented AI monitoring tools

AI Skunkworks Platform Vision

These products combine into a complete AI security and governance ecosystem:

AI Guard Manager

│

│

├── Prompt Firewall

├── PromptWatch Monitoring

├── AI Compliance Engine

├── Persona & Agent Simulator

├── Prompt Risk Analyzer

├── AI Inventory Tracker

├── LLM Security Gateway

└── AI Red Team Framework

Together they allow organizations to govern the entire AI lifecycle:

AI Idea

↓

Use Case Registration

↓

Risk Assessment

↓

Guardrail Design

↓

Testing & Red Teaming

↓

Deployment

↓

Monitoring

↓

Compliance Reporting